Facebook has announced an upgrade to its suicide prevention AI so that it can respond to and report users who express thoughts of suicide in posts or live videos.

The company has long tried to connect people in distress with those who can support them, as part of an effort to build a community that is safe on and off Facebook.

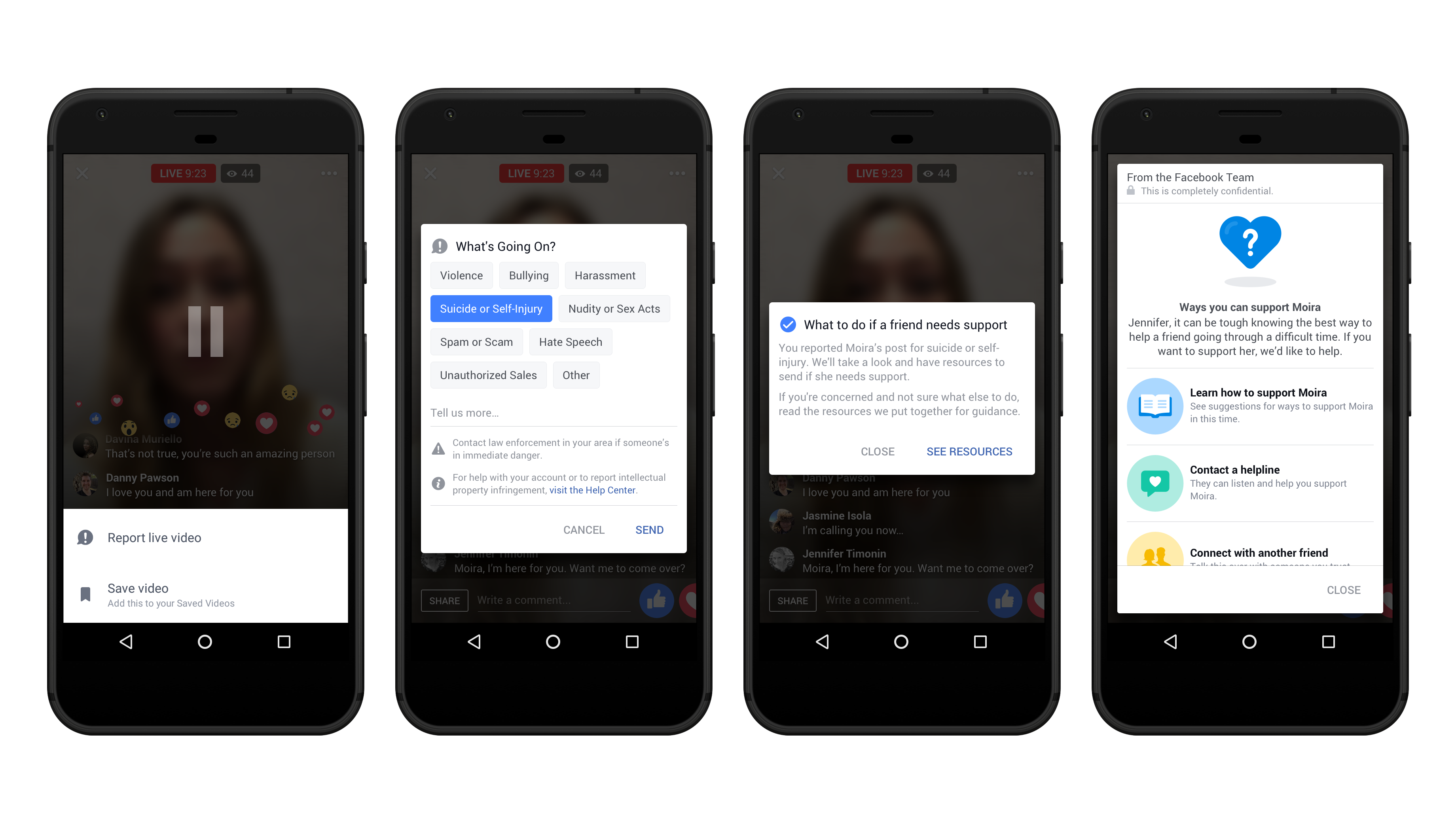

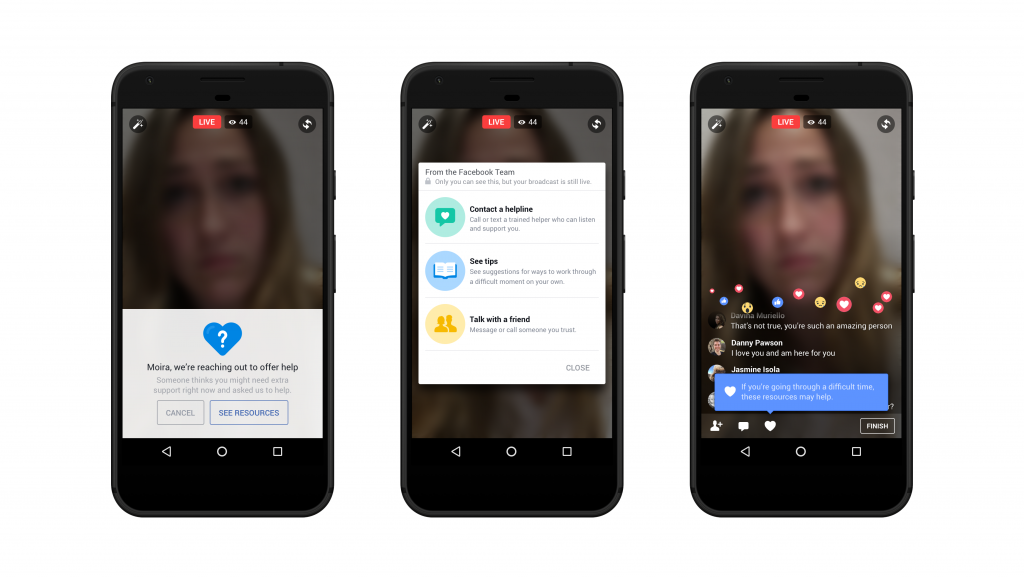

Facebook uses pattern recognition to detect posts or live videos in which a user might be expressing thoughts of suicide, so that it can report and respond to them. It has also improved how it identifies appropriate first responders, and it is adding more dedicated reviewers from its Community Operations team to review reports of suicide or self-harm.

Facebook’s AI helps reduce response times for at-risk users by prioritizing the content it deems especially worrisome, so that human moderators can review it and take the most appropriate action depending on the level of risk.

“We provide people with a number of support options, such as the option to reach out to a friend and even offer suggested text templates. We also suggest contacting a helpline and offer other tips and resources for people to help themselves in that moment,” Facebook’s Guy Rosen says.

The upgrade, however, won’t roll out across Europe, because the EU’s General Data Protection Regulation (GDPR) privacy rules make the use of such technology rather complicated.
