There is a quiet arms race unfolding inside the servers of every major media platform on the internet, and most people have no idea it’s happening. On one side: artificial intelligence tools that can generate photorealistic video from a single text prompt, conjuring people, places, and events that never existed. On the other: a decades-old system of digital identification called video fingerprinting, the technology that platforms, broadcasters, and rights holders use to recognize content the moment it appears online. The collision between these two forces is reshaping copyright law, content security, and the very definition of visual truth. To understand what’s at stake, it helps to start with what a fingerprint actually is, and why generative AI is forcing engineers to rethink everything they built.

What a Video Fingerprint Actually Does

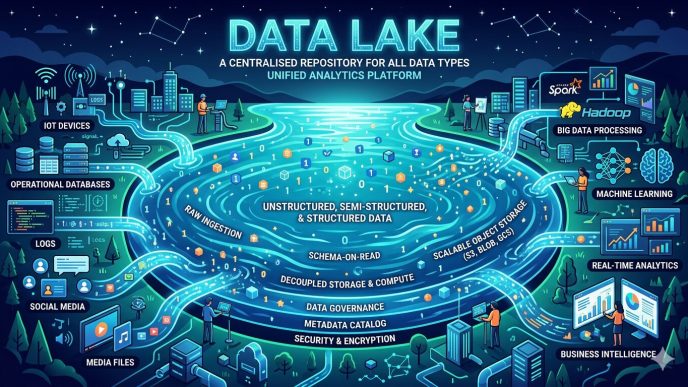

Video fingerprinting is a form of digital identification built on a deceptively simple premise: every piece of video content, like every human fingerprint, has a unique signature. The technology works by analyzing a video at the pixel and frame level, extracting mathematical descriptors that capture the content’s distinctive visual and audio characteristics. These descriptors, collectively called a video fingerprint, are then stored in a reference database. When a new video appears on a platform, the system generates a fingerprint for it and checks that fingerprint against the database. A sufficiently close match means the content has been identified.
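The extract, store, and match loop described above can be sketched in a few lines. This is a minimal illustration, not how Content ID or any commercial product works: it assumes frames are already decoded into small grayscale grids, uses a simple "average hash" as the descriptor, and compares fingerprints by counting differing bits. All function names, the 4x4 frame size, and the 0.9 threshold are hypothetical choices made for the example.

```python
from typing import Dict, List, Optional, Sequence, Tuple

Frame = Sequence[Sequence[int]]  # grayscale pixels, 0-255 (assumed pre-decoded)


def average_hash(frame: Frame) -> int:
    """Pack one bit per pixel: 1 if the pixel is brighter than the frame mean."""
    pixels = [p for row in frame for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def fingerprint(video: Sequence[Frame]) -> List[int]:
    """A video fingerprint here is simply one hash per frame."""
    return [average_hash(frame) for frame in video]


def hamming(a: int, b: int) -> int:
    """Number of bit positions where two hashes differ."""
    return bin(a ^ b).count("1")


def similarity(fp_a: List[int], fp_b: List[int], bits_per_frame: int) -> float:
    """Fraction of matching bits, comparing frames position by position."""
    n = min(len(fp_a), len(fp_b))
    if n == 0:
        return 0.0
    differing = sum(hamming(a, b) for a, b in zip(fp_a, fp_b))
    return 1.0 - differing / (n * bits_per_frame)


def best_match(
    query: List[int],
    database: Dict[str, List[int]],
    bits_per_frame: int,
    threshold: float = 0.9,  # hypothetical cut-off for "close enough"
) -> Optional[Tuple[str, float]]:
    """Return the closest reference at or above the threshold, else None."""
    best: Optional[Tuple[str, float]] = None
    for name, reference in database.items():
        score = similarity(query, reference, bits_per_frame)
        if score >= threshold and (best is None or score > best[1]):
            best = (name, score)
    return best


# Two tiny 4x4 "frames" standing in for a rights holder's reference clip.
frame_a = [[10, 200, 10, 200], [200, 10, 200, 10],
           [10, 200, 10, 200], [200, 10, 200, 10]]
frame_b = [[200, 200, 10, 10], [200, 200, 10, 10],
           [10, 10, 200, 200], [10, 10, 200, 200]]
reference_clip = [frame_a, frame_b]
database = {"reference_clip": fingerprint(reference_clip)}

# A re-upload with every pixel brightened slightly still hashes the same way.
brightened_copy = [[[p + 10 for p in row] for row in f] for f in reference_clip]
# Footage that was never registered has nothing to match against.
unregistered = [frame_b, frame_a]

print(best_match(fingerprint(brightened_copy), database, bits_per_frame=16))
print(best_match(fingerprint(unregistered), database, bits_per_frame=16))
```

The brightened copy is recognized because the hash depends on each pixel's relation to the frame mean rather than on exact values, which is the property that lets fingerprints survive mild re-processing. The unregistered clip returns `None`: the matcher can only recognize what was put into the database beforehand.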

The process happens in milliseconds at industrial scale. YouTube’s Content ID system, arguably the most famous example of this technology in action, scans every upload against a database containing millions of reference files submitted by rights holders: studios, record labels, sports leagues, news organizations. When it finds a match, the rights holder can choose to block the video, monetize it, or simply track its viewership. The system has generated billions of dollars in royalty payments since its launch. Alongside Content ID, the broader ecosystem of video fingerprinting software includes platforms like Audible Magic, WebKyte, TECXIPIO, and nablet, each serving different segments of the market, from broadcast monitoring to social media rights management.

The Generative Disruption

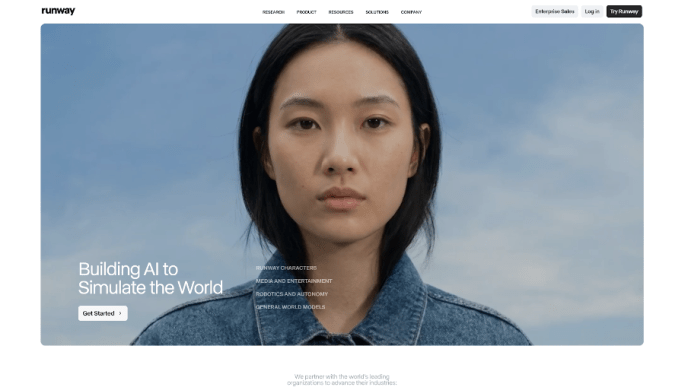

Then came tools like OpenAI’s Sora, Runway ML, Kling, and a dozen competing platforms capable of producing high-definition synthetic video on demand. These systems don’t copy existing content — they synthesize entirely new visual sequences from scratch, trained on vast libraries of real-world footage. A user can type “a cinematic shot of a woman walking through a rainy Tokyo street at night” and receive a polished, photorealistic clip within seconds. No original footage was used. No existing fingerprint exists. The database has nothing to match against.

This is the foundational challenge that generative video poses to classical video fingerprinting systems: they were designed to find needles they already knew about in a haystack. Synthetic content is a needle that was never catalogued.

The problem compounds when bad actors get involved. In late 2025, cybersecurity firm Reality Defender conducted a controlled experiment against OpenAI’s Sora 2 platform, which had launched an identity verification feature designed to prevent impersonation. The researchers collected publicly available footage of prominent executives and celebrities, built real-time deepfake systems that synchronized synthetic faces to live operator movements, and submitted these fabricated identities through the platform’s verification process. Every attempt succeeded. Sora’s own platform detected nothing. Reality Defender’s independent detection API, meanwhile, flagged every single fake with over 95% confidence. The experiment revealed a damning structural weakness: generative AI platforms cannot reliably police their own outputs, because their detection systems are trained on the same data distributions they generate.

How Detection Works — and Where It Breaks Down

Modern AI-based detection doesn’t rely on database matching alone. It looks for artifacts — the microscopic imperfections that generative models leave behind in their outputs. Each model has its own characteristic signature: Sora tends to produce specific motion blur patterns under rapid movement; Runway Gen-4 leaves subtle inconsistencies in how light behaves across frame transitions; diffusion-based models often generate slight temporal flickering invisible to the naked eye but detectable by trained algorithms.
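To make the idea of a measurable artifact concrete, here is a toy score for one of the signals mentioned above, temporal flicker. It is only a sketch under strong assumptions: production detectors are trained models, not hand-written rules, and the `flicker_score` function, its use of per-frame mean brightness, and the tiny solid-color frames are all hypothetical simplifications.

```python
from typing import List, Sequence

Frame = Sequence[Sequence[int]]  # grayscale pixels, 0-255 (assumed pre-decoded)


def frame_mean(frame: Frame) -> float:
    """Average brightness of one frame."""
    pixels = [p for row in frame for p in row]
    return sum(pixels) / len(pixels)


def flicker_score(video: Sequence[Frame]) -> float:
    """Mean absolute second difference of per-frame brightness.

    A smooth fade changes brightness at a constant rate, so its second
    difference is zero. Frame-to-frame jitter alternates direction and
    produces large second differences.
    """
    means: List[float] = [frame_mean(f) for f in video]
    if len(means) < 3:
        return 0.0
    second_diffs = [
        means[i - 1] - 2 * means[i] + means[i + 1]
        for i in range(1, len(means) - 1)
    ]
    return sum(abs(d) for d in second_diffs) / len(second_diffs)


def solid(value: int) -> Frame:
    """Hypothetical helper: a 2x2 frame of uniform brightness."""
    return [[value, value], [value, value]]


smooth_fade = [solid(v) for v in (100, 102, 104, 106, 108)]
flickering = [solid(v) for v in (100, 104, 100, 104, 100)]

print(flicker_score(smooth_fade))  # 0.0
print(flicker_score(flickering))   # 8.0
```

A four-level brightness wobble is far too small to notice by eye, yet it separates the two clips cleanly in this score. The weakness is the same one the article describes: a hand-picked signal like this only helps against generators that happen to produce it.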

This approach, sometimes called AI model fingerprinting, can achieve 90–95% accuracy when identifying content from known, well-studied generation systems. The catch is the word “known.” Detection systems that perform brilliantly against today’s models face a constant obsolescence problem — every new model version, every fine-tuned variant, every custom-trained derivative produces a slightly different artifact profile. Researchers at Binghamton University, working on a DARPA-funded grant announced in late 2025, described the challenge bluntly: detection technology is perpetually chasing a moving target, and the gap between generative capability and detection accuracy widens every time a new model ships.

Deepfake fraud attempts at financial institutions surged 2,137% between 2024 and 2025, with average losses reaching $500,000 per incident — numbers that illustrate precisely why the stakes extend far beyond copyright management into identity fraud, financial crime, and geopolitical manipulation.

The Watermarking Counter-Strategy

Faced with the limitations of reactive detection, the industry is increasingly betting on a proactive alternative: embedding identification into AI-generated content at the moment of creation, before it ever reaches the public.

The leading framework for this approach is the C2PA standard — the Coalition for Content Provenance and Authenticity, backed by Adobe, Microsoft, Google, Sony, and dozens of other technology and media companies. C2PA works by attaching a cryptographically signed “provenance manifest” to a video file at the point of creation. This manifest functions like a nutrition label for digital media: it records which tools were used, what edits were made, and whether any AI was involved in generating the content. The signature travels with the file as it moves across platforms, and any compatible verification tool can read it.
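The core mechanism, a signed record bound to a specific file, can be illustrated with the Python standard library. This is a simplified stand-in and not the C2PA format: real C2PA manifests use certificate-based signatures and a defined container structure, whereas this sketch uses an HMAC with a shared demo key. The field names, the key, and both function names are assumptions made for the example.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. A real system would sign with a private key tied to
# a certificate, not a shared secret.
SIGNING_KEY = b"demo-key-not-for-production"


def make_manifest(video_bytes: bytes, tool: str, ai_generated: bool,
                  key: bytes = SIGNING_KEY) -> dict:
    """Build a claim about the file and sign it."""
    claim = {
        "content_sha256": hashlib.sha256(video_bytes).hexdigest(),
        "tool": tool,
        "ai_generated": ai_generated,
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(video_bytes: bytes, manifest: dict,
                    key: bytes = SIGNING_KEY) -> bool:
    """Check that the claim is unaltered and still describes these bytes."""
    claim = manifest["claim"]
    payload = json.dumps(claim, sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claim was edited after signing
    return claim["content_sha256"] == hashlib.sha256(video_bytes).hexdigest()


video = b"\x00\x01\x02 pretend these are encoded video bytes"
manifest = make_manifest(video, tool="example-generator", ai_generated=True)

print(verify_manifest(video, manifest))              # True
print(verify_manifest(video + b"edited", manifest))  # False
```

Two checks happen during verification: the signature shows the claim has not been rewritten, and the content hash shows the claim belongs to this exact file. Flipping `ai_generated` to `False` after signing fails the first check; altering the video bytes fails the second.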

The approach has gained significant regulatory momentum. California’s AI Transparency Act took full effect in January 2026, requiring disclosure of AI-generated content in specified contexts. The EU AI Act’s watermarking mandates are scheduled to come into force in August 2026, making C2PA-compatible provenance signals a compliance requirement across the European market rather than a voluntary industry standard. YouTube, Meta, and TikTok have all begun requiring AI disclosure labels on synthetic content submitted for advertising purposes, with de-ranking and ad disapproval penalties for non-compliance.

ONVIF, the global standards body for video surveillance technology, announced a formal partnership with C2PA in June 2025 specifically to extend provenance protections into security camera infrastructure — a recognition that the authenticity problem reaches far beyond social media into courtrooms and law enforcement.

The Limits of Every Lock

None of these countermeasures are foolproof, and the more honest voices in the field say so openly. C2PA provenance manifests can be stripped from a file. Watermarks can be degraded through re-encoding, cropping, or format conversion. An adversarial user who downloads a Sora-generated video and re-uploads it through a different encoder may leave no detectable trace of its synthetic origin. The detection models trained today will need continuous retraining as generation models evolve — a maintenance commitment that most platforms underestimate.
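The stripping problem is easy to see in miniature. The sketch below models a file as a dictionary with picture data and metadata, and models a careless re-encode as a step that keeps the picture and drops everything else. The container layout, the `provenance` key, and both function names are hypothetical and chosen only for illustration.

```python
def reencode(container: dict) -> dict:
    """Toy stand-in for a transcoder that keeps the picture but drops metadata."""
    return {"pixels": container["pixels"], "metadata": {}}


def provenance_status(container: dict) -> str:
    """Report what the attached provenance record, if any, declares."""
    manifest = container.get("metadata", {}).get("provenance")
    if manifest is None:
        return "unknown"  # absence of a label proves nothing either way
    return "ai_generated" if manifest.get("ai_generated") else "declared_not_ai"


original = {
    "pixels": b"synthetic frames",
    "metadata": {"provenance": {"ai_generated": True, "tool": "example-generator"}},
}
reuploaded = reencode(original)

print(provenance_status(original))    # ai_generated
print(provenance_status(reuploaded))  # unknown
```

The picture data is identical in both files, but the second one carries no record of where it came from. A checker that treats "unknown" as "authentic" is trivially defeated, which is why provenance labels are usually paired with detection rather than used alone.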

The deeper philosophical problem is that video fingerprinting technology was built on the assumption that authentic content exists as a fixed reference point. Generative AI dissolves that assumption entirely. When any image, voice, or scene can be fabricated at near-zero cost, the entire architecture of identity-by-comparison starts to feel insufficient.

The Race Has No Finish Line

What’s emerging from all of this isn’t a single technological solution. It’s a layered ecosystem of tools, standards, legal requirements, and institutional practices that together create friction for bad actors without making synthetic media impossible for legitimate creators. The logic of the automatic content recognition (ACR) market applies here too: identification only works if it’s faster, cheaper, and more scalable than evasion. For now, that balance holds in most commercial contexts. For now.

The engineers building the next generation of detection systems are not trying to win a war. They’re trying to maintain a credible deterrent — the digital equivalent of a lock on a door that most people won’t bother picking. The question that keeps the field up at night isn’t whether the lock can be broken. It’s whether anyone will notice when it is.