In the era of artificial intelligence, data is no longer just a supporting asset—it is the foundation of competitive advantage. For a Lake AI company, which relies on large volumes of structured and unstructured data, the journey from raw information to actionable insights determines how fast, accurate, and valuable its solutions can be. However, this journey only becomes sustainable when supported by a scalable data strategy.

As data volumes grow and use cases become more complex, organizations that fail to plan for scale risk performance bottlenecks, unreliable insights, and missed opportunities. Understanding why scalability matters, and how to achieve it, is essential for any Lake AI company aiming to lead in its market.

The Role of Data Lakes in AI-Driven Organizations

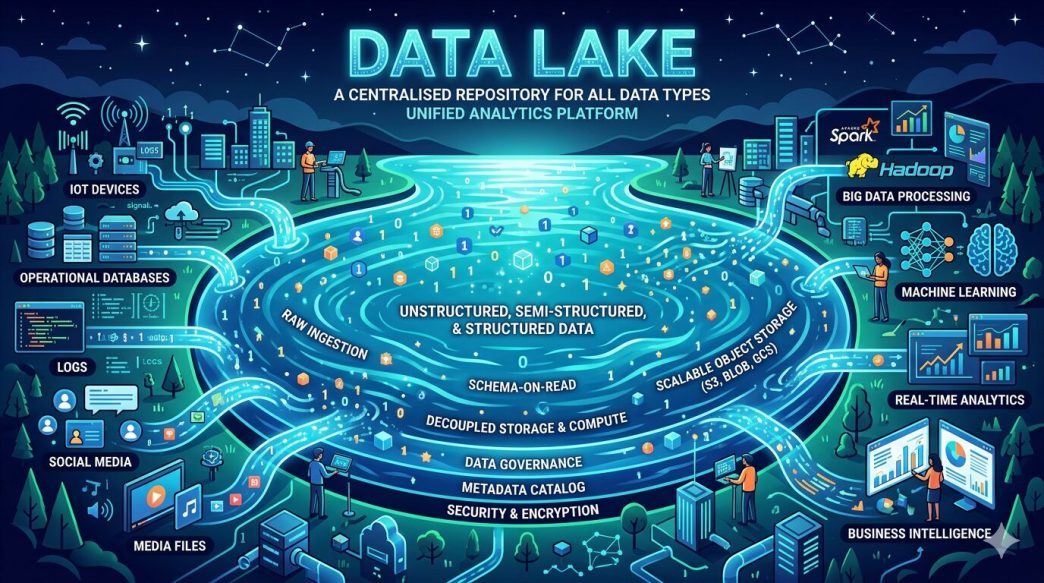

What a Data Lake Really Represents

A data lake is more than a storage repository. It is a centralized environment designed to store raw data in its native format, whether structured, semi-structured, or unstructured. This flexibility is particularly valuable for AI initiatives, where models often need access to diverse datasets such as logs, images, text, sensor data, and transactional records.

For a Lake AI company, the data lake acts as the single source of truth. Because data is stored in its native format and a schema is applied only when the data is read, teams can experiment, train, and continuously improve machine learning models without the upfront schema constraints of traditional databases.

Why AI Workloads Demand Scale

AI systems are inherently data-hungry. As models evolve, they require more data for training, validation, and real-time inference. At the same time, business users expect faster insights and more accurate predictions. Without a scalable data strategy, these demands quickly overwhelm infrastructure, leading to slow processing, rising costs, and operational complexity.

From Raw Data to Insights: The Real Challenge

The Problem with Unstructured Growth

Collecting data is easy. Extracting value from it is not. Many organizations accumulate massive amounts of raw data but struggle to transform it into insights. Common challenges include data silos, inconsistent formats, poor data quality, and limited accessibility for analytics teams.

In a Lake AI environment, these issues are amplified. The more data sources you ingest, the harder it becomes to manage them without a clear, scalable approach.

Turning Data into Usable Intelligence

A scalable data strategy ensures that raw data can be ingested, processed, and analyzed efficiently. It defines how data flows from ingestion to transformation, analytics, and AI model consumption. This structure allows teams to focus on generating insights rather than constantly fixing pipelines or performance issues.
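The flow described above, from ingestion through transformation to analytics, can be sketched as a chain of small stages. This is only an illustration under stated assumptions: the `sensor_id,value` line format, the function names, and the per-sensor mean are all hypothetical and stand in for whatever sources and metrics a real platform uses.

```python
from collections import defaultdict
from typing import Iterable


def ingest(raw_lines: Iterable[str]) -> list[dict]:
    """Land raw 'sensor_id,value' lines as records without interpreting them."""
    records = []
    for line in raw_lines:
        sensor_id, _, value = line.strip().partition(",")
        records.append({"sensor_id": sensor_id, "value": value})
    return records


def transform(records: list[dict]) -> list[dict]:
    """Cast values to float and drop records that cannot be parsed."""
    clean = []
    for record in records:
        try:
            clean.append(
                {"sensor_id": record["sensor_id"], "value": float(record["value"])}
            )
        except ValueError:
            continue  # a real pipeline would likely route this to a quarantine area
    return clean


def analyze(records: list[dict]) -> dict[str, float]:
    """Compute the mean value per sensor."""
    values = defaultdict(list)
    for record in records:
        values[record["sensor_id"]].append(record["value"])
    return {sensor: sum(v) / len(v) for sensor, v in values.items()}


def run_pipeline(raw_lines: Iterable[str]) -> dict[str, float]:
    """Chain the stages: ingestion, then transformation, then analytics."""
    return analyze(transform(ingest(raw_lines)))


insights = run_pipeline(["a,1.0", "a,3.0", "b,oops", "b,5"])
print(insights)
```

Keeping each stage as a separate function means one stage can be replaced or scaled without touching the others, which is the property the strategy is meant to protect.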

Why Scalability Is Non-Negotiable for Lake AI Companies

Supporting Growth Without Rebuilding Everything

One of the biggest advantages of a scalable data strategy is future-proofing. As a Lake AI company grows, it may onboard new clients, integrate new data sources, or expand into new markets. A scalable architecture allows these changes without requiring a complete redesign of the data platform.

Instead of reacting to growth with costly migrations, organizations can scale storage, compute, and processing capabilities on demand.

Enabling Faster Innovation Cycles

AI innovation depends on speed. Data scientists need quick access to large datasets to test hypotheses and train models. A scalable data strategy reduces friction by providing consistent performance, even as workloads increase.

This agility shortens development cycles and allows companies to bring new AI-driven features to market faster than competitors.

Managing Costs More Effectively

Scalability is not just about handling more data—it is also about doing so efficiently. A well-designed data strategy aligns resource usage with actual demand. This prevents overprovisioning and helps control costs as data volumes fluctuate.

For Lake AI companies operating at scale, cost efficiency can be just as important as technical performance.
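As a rough illustration of aligning resource usage with demand, the sketch below sizes a worker pool from the current backlog. The function name, the parameters, and the limits of 1 and 20 workers are assumptions chosen for the example, not values taken from any particular platform.

```python
import math


def workers_needed(
    pending_tasks: int,
    tasks_per_worker: int,
    min_workers: int = 1,
    max_workers: int = 20,
) -> int:
    """Return a worker count that tracks demand, clamped to a fixed range."""
    if tasks_per_worker <= 0:
        raise ValueError("tasks_per_worker must be positive")
    desired = math.ceil(pending_tasks / tasks_per_worker)
    # The floor keeps the platform responsive; the ceiling caps spend.
    return max(min_workers, min(max_workers, desired))


for backlog in (0, 950, 10_000):
    print(backlog, workers_needed(backlog, tasks_per_worker=100))
```

The upper bound is what prevents overprovisioning during a spike, while the lower bound avoids scaling to zero when the backlog is briefly empty.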

Key Components of a Scalable Data Strategy

Flexible Data Ingestion Pipelines

Scalable data strategies start with robust ingestion pipelines capable of handling batch and streaming data. These pipelines must adapt to varying data velocities and formats without compromising reliability.

Flexibility at this stage ensures that new data sources can be added quickly, supporting evolving AI use cases.
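One way to handle batch and streaming data with the same code path is to treat both as iterables and process them in small chunks. The sketch below assumes a hypothetical `micro_batches` helper and toy event records; a production pipeline would add retries, checkpoints, and schema handling.

```python
from itertools import islice
from typing import Iterable, Iterator


def micro_batches(source: Iterable[dict], size: int) -> Iterator[list[dict]]:
    """Yield fixed-size chunks from any iterable, whether finite or streaming."""
    iterator = iter(source)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def event_stream(count: int) -> Iterator[dict]:
    """Stand-in for a streaming source that produces events one at a time."""
    for event_id in range(count):
        yield {"event_id": event_id}


# Stand-in for a batch source that is fully available up front.
batch_file = [{"event_id": event_id} for event_id in range(5)]

batch_sizes = [len(chunk) for chunk in micro_batches(batch_file, 2)]
stream_sizes = [len(chunk) for chunk in micro_batches(event_stream(5), 2)]
print(batch_sizes, stream_sizes)
```

Because downstream code only sees chunks, adding a new source is a matter of exposing it as an iterable rather than rewriting the pipeline.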

Distributed Storage and Compute

To support large-scale analytics and machine learning, data storage and compute should be decoupled and distributed. This approach allows each layer to scale independently, optimizing performance for both data processing and model training.

For a Lake AI company, this separation is critical to maintaining responsiveness as workloads grow.
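The separation of storage and compute can be illustrated with a small sketch in which compute workers depend only on a storage interface. The `ObjectStore` protocol, the `InMemoryStore` class, and the partition names are hypothetical; in practice the store would be an object storage service and the workers a distributed engine.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Protocol


class ObjectStore(Protocol):
    """The only contract the compute layer knows about."""

    def list_keys(self) -> list[str]: ...

    def read(self, key: str) -> list[float]: ...


class InMemoryStore:
    """Toy storage layer holding partitions in a dictionary."""

    def __init__(self, partitions: dict[str, list[float]]) -> None:
        self._partitions = partitions

    def list_keys(self) -> list[str]:
        return sorted(self._partitions)

    def read(self, key: str) -> list[float]:
        return self._partitions[key]


def total(store: ObjectStore, workers: int) -> float:
    """Sum all partitions using a configurable number of compute workers."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_sums = pool.map(lambda key: sum(store.read(key)), store.list_keys())
        return sum(partial_sums)


store = InMemoryStore(
    {"part-0": [1.0, 2.0], "part-1": [3.0, 4.0], "part-2": [5.0, 6.0]}
)
print(total(store, workers=1), total(store, workers=4))
```

Changing `workers` scales compute without touching the stored data, and swapping `InMemoryStore` for another implementation changes storage without touching the compute logic.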

Strong Data Governance and Quality Controls

As data scales, so does the risk of inconsistency and misuse. A scalable strategy includes governance frameworks that define data ownership, access controls, and quality standards.

These controls ensure that insights generated from the data are trustworthy and compliant with internal and external requirements.
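Ownership, access controls, and quality standards can be expressed as data rather than tribal knowledge. The following is a minimal sketch, assuming a hypothetical `DatasetPolicy` record with made-up role and field names; real governance tooling is considerably richer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetPolicy:
    owner: str                  # team accountable for the dataset
    allowed_roles: frozenset    # roles permitted to read it
    required_fields: tuple      # fields that must be present and non-null


def can_read(policy: DatasetPolicy, role: str) -> bool:
    """Access control: only listed roles may read the dataset."""
    return role in policy.allowed_roles


def failing_rows(policy: DatasetPolicy, rows: list[dict]) -> list[int]:
    """Quality check: indices of rows with a missing or null required field."""
    return [
        index
        for index, row in enumerate(rows)
        if any(row.get(name) is None for name in policy.required_fields)
    ]


policy = DatasetPolicy(
    owner="data-platform-team",
    allowed_roles=frozenset({"data_scientist", "data_engineer"}),
    required_fields=("customer_id", "amount"),
)
rows = [
    {"customer_id": 1, "amount": 9.5},
    {"customer_id": 2, "amount": None},
    {"amount": 3.0},
]
bad = failing_rows(policy, rows)
print(can_read(policy, "data_scientist"), bad)
```

Declaring the policy next to the dataset makes it possible to run the same checks automatically on every new source as the lake grows.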

The Strategic Impact on AI Outcomes

Better Models Through Better Data

High-quality, well-organized data tends to produce better AI models. When data pipelines are scalable and reliable, models can be trained on larger, more diverse datasets, which can improve accuracy and help reduce bias.

This creates a virtuous cycle where better insights drive better decisions, which in turn generate more valuable data.

Aligning Data Strategy with Business Goals

A scalable data strategy bridges the gap between technical capabilities and business objectives. It ensures that data investments directly support measurable outcomes, such as improved customer experiences, operational efficiency, or new revenue streams.

For Lake AI companies, this alignment is what transforms AI from an experimental tool into a core business driver.

Preparing for the Future of Data and AI

Adapting to Emerging Technologies

The data and AI landscape is constantly evolving. New processing frameworks, analytics tools, and AI techniques emerge every year. A scalable data strategy provides the flexibility to adopt these innovations without disrupting existing operations.

This adaptability is essential for long-term relevance in a highly competitive market.

Building a Culture Around Data

Technology alone is not enough. Successful Lake AI companies also invest in people and processes. A scalable data strategy encourages collaboration between data engineers, data scientists, and business stakeholders, creating a shared understanding of how data drives value.

Over time, this culture becomes a powerful differentiator.

Conclusion: Scalability as a Competitive Advantage

For any Lake AI company, the path from raw data to meaningful insights is defined by its data strategy. Scalability is not a technical luxury—it is a strategic necessity. Without it, data platforms become bottlenecks instead of enablers.

By investing in a scalable data strategy, organizations ensure that their data lakes remain flexible, efficient, and aligned with business goals. This foundation empowers AI initiatives to grow, innovate, and deliver consistent value in an increasingly data-driven world.