Motion is the primary failure point in generative media. While static image generation has reached a point of high fidelity and predictable logic, the transition to video often introduces a chaotic variable: entropy. For creative operations leads, the challenge isn’t just generating a sequence that moves; it is maintaining structural integrity across hundreds of frames. When a character’s silhouette shifts or a background texture “crawls,” the asset becomes unusable for professional delivery.

In professional pipelines, moving from amateur experimentation to production-ready output means abandoning stochastic “guessing” in favor of intentional choreography. That, in turn, requires understanding how camera movement, subject kinetics, and temporal pacing interact within models like Nano Banana Pro. Achieving coherence is not about luck; it is about the systematic reduction of noise across the temporal axis.

The Foundation: Why Static Precision Precedes Motion

The most common mistake in AI video production is treating the motion prompt as the starting point. In reality, the quality of a video sequence is tethered to the quality of its “keyframe zero.” If the initial frame lacks structural definition, the motion model has no reliable anchor to propagate through time.

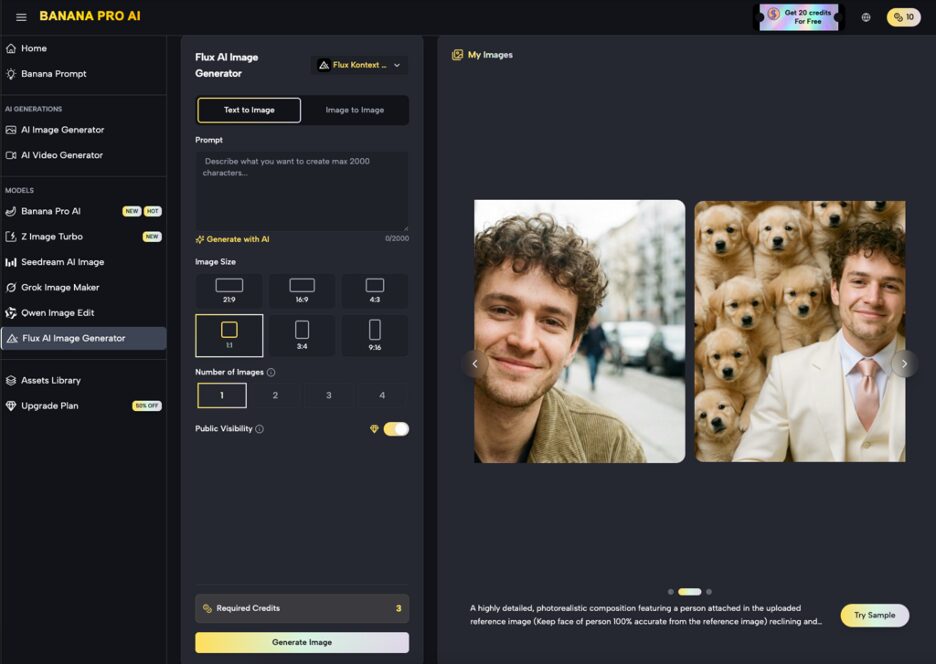

This is where the AI Image Editor becomes a critical utility in the pipeline. Rather than relying on a text-to-video prompt to resolve both the aesthetic and the movement at once, operators should use the editor to refine the initial composition. Adjusting depth of field, sharpening focal points, or removing distracting artifacts before the motion pass gives the Banana AI engine a cleaner, less ambiguous starting frame to animate.

A high-fidelity base frame reduces the “heavy lifting” the model has to do. When the model doesn’t have to resolve ambiguous pixels in the background, more of its capacity can go toward keeping the primary subject consistent.

Managing the Camera: Directing Without Distortion

Camera movement in AI-generated video often feels ungrounded: a “dreamlike” float that lacks the weight of real-world optics. To achieve a professional look, operators must simulate physical camera constraints. Whether you are aiming for a slow dolly-in or a complex pan, the instructions must be specific enough to keep the environment from warping.

In the Nano Banana Pro environment, camera control is essentially a negotiation with the latent space. If you ask for a “fast pan” without sufficient detail in the environment, the model may simply “stretch” the existing pixels rather than generating new, coherent geometry behind the subject.

There is an inherent uncertainty here: current generative models often struggle with disocclusion, that is, inferring what should appear behind an object once the camera moves past it. If a camera pans around a building, the model is essentially hallucinating the far side of that building as it goes. Until models have a more robust 3D model of the world, operators should favor shorter, more controlled camera arcs over long, sweeping cinematic movements to preserve the structural logic of the scene.

Subject Kinetics and the Physics of AI

Subject motion is significantly harder to control than camera movement because it involves changing the internal geometry of the subject. A person walking, a bird flapping its wings, a liquid pouring: each requires the model to capture real-world physics, which AI models do not “know” in a traditional sense. They only model the statistical likelihood of pixel arrangements.

When working with Nano Banana, pay close attention to how the model handles “liminal” frames, the frames between two major movements. This is often where coherence breaks down. In a walking sequence, for example, the moment when one foot lifts and the other plants is a high-risk zone for visual “melting.”

To mitigate this, production teams often break a complex action into smaller segments and then re-stitch them, which preserves a higher level of detail. It also helps to use descriptive language that implies speed and weight, such as “heavy, deliberate steps” rather than a bare “running,” which gives the Banana Pro model better cues on how to distribute motion vectors.

Pacing and Temporal Consistency

Pacing in AI video is often tied to the “motion bucket” or the intensity value assigned to the generation. High motion values often result in more dynamic movement but significantly higher rates of artifacting. Low motion values maintain high coherence but can result in a “stiff” or “static” feel that looks like a moving photograph rather than a video.

Finding the “sweet spot” in Nano Banana Pro requires iterative testing. For a repeatable asset pipeline, establishing a benchmark for different types of content is essential.

- Product Showcases: Usually require a motion intensity of 2-4 (on a 10-point scale) to maintain label legibility and product shape.

- Atmospheric Backgrounds: Can handle higher intensity (6-8) because minor warping in clouds or water is less noticeable to the human eye.

- Character Close-ups: Require the most conservative pacing. Anything above a 3 often leads to facial distortion or “double-eye” artifacts.
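The benchmarks above can be captured as a small lookup table so that every operator starts from the same ranges. This is a minimal sketch: the content-type keys, the 1-10 integer scale, and the lower bound of 1 for close-ups are assumptions (the text gives only an upper limit of 3 for close-ups), and nothing here reflects how Nano Banana Pro actually names or exposes its motion setting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MotionRange:
    """Inclusive intensity range on an assumed 1-10 scale."""
    low: int
    high: int


# Starting ranges from the benchmarks; the close-up lower bound of 1 is an assumption.
MOTION_PRESETS = {
    "product_showcase": MotionRange(2, 4),
    "atmospheric_background": MotionRange(6, 8),
    "character_closeup": MotionRange(1, 3),
}


def check_intensity(content_type: str, intensity: int) -> str:
    """Compare a proposed intensity with the benchmark for its content type."""
    if not 1 <= intensity <= 10:
        raise ValueError("intensity must be on the 1-10 scale")
    preset = MOTION_PRESETS[content_type]  # raises KeyError for unknown content types
    if intensity > preset.high:
        return "too high: expect artifacting"
    if intensity < preset.low:
        return "too low: risks a stiff, moving-photograph feel"
    return "within benchmark"
```

For example, `check_intensity("character_closeup", 5)` returns `"too high: expect artifacting"`, which is the facial-distortion risk described for close-ups. Keeping the table in one place means a revised benchmark updates every check at once.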

We must acknowledge a limitation here: currently, no AI model, including the most advanced iterations of Banana Pro, can perfectly sync complex biological movement with precise timing over long durations without manual intervention. Expecting a 30-second continuous take of a character performing a complex task is unrealistic with today’s tools.

The Role of Nano Banana in the Creative Loop

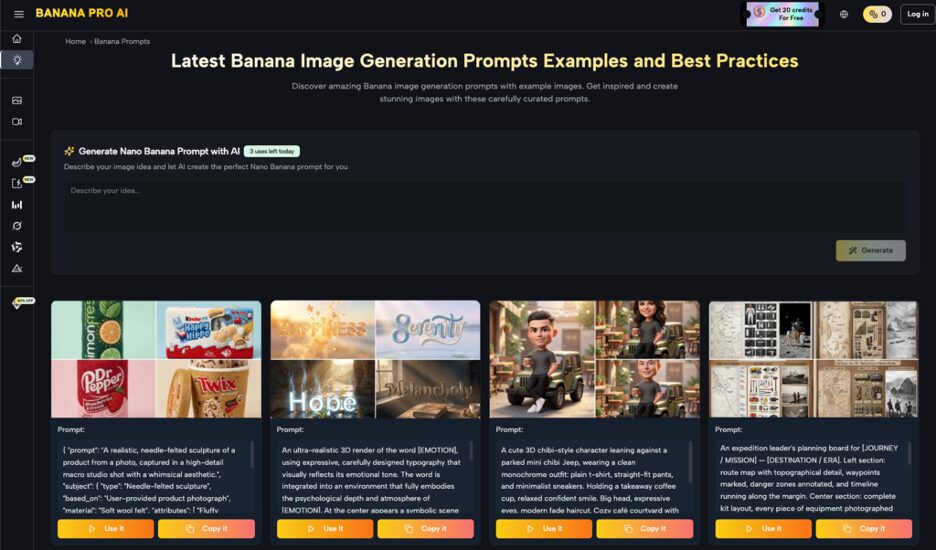

The Nano Banana model acts as the engine for these explorations. For creative ops leads, the goal is to create a feedback loop between the designer and the model. This isn’t a “one-and-done” process.

A typical professional workflow might look like this:

- Generate a batch of 10-20 low-resolution clips to test motion vectors.

- Select the clip with the most stable physics.

- Take a frame from that clip back into the editor to refine the aesthetic.

- Use that refined frame as the seed for a high-resolution render in Nano Banana Pro.
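The four steps can be written as a single function whose stages are passed in as callables. Every callable here (`generate_preview`, `refine_frame`, `render_final`) is a hypothetical stand-in for whatever generation and editing tools a team actually uses; no real Nano Banana API is implied, and the `stability` score is assumed to come from a reviewer or an internal metric.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Clip:
    clip_id: str
    stability: float   # higher means steadier physics; how it is scored is assumed
    frames: List[str]  # e.g. paths to exported frames


def run_motion_loop(
    prompt: str,
    generate_preview: Callable[[str, int], Clip],  # hypothetical low-res generator
    refine_frame: Callable[[str], str],            # hypothetical image-editor pass
    render_final: Callable[[str, str], str],       # hypothetical high-res render
    batch_size: int = 10,
    frame_index: int = 0,
) -> str:
    """Preview batch -> pick steadiest clip -> refine one frame -> final render."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    # 1. Generate a batch of low-resolution clips to test motion.
    batch = [generate_preview(prompt, variant) for variant in range(batch_size)]
    # 2. Keep the clip with the most stable physics.
    best = max(batch, key=lambda clip: clip.stability)
    # 3. Send one of its frames back to the editor for aesthetic refinement.
    refined = refine_frame(best.frames[frame_index])
    # 4. Use the refined frame as the starting image for the high-res render.
    return render_final(prompt, refined)
```

Injecting the stages keeps the loop itself tool-agnostic: `variant` would typically select a different random seed per preview, and swapping in a different editor or renderer does not change the control flow.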

This iterative approach treats the AI as a collaborator in a technical pipeline rather than a magic box. By controlling the inputs at each stage, you reduce the probability of a “hallucination” ruining a final render.

Navigating Technical Limitations and Expectations

Even with the most sophisticated tools, there are clear boundaries to what is currently possible. One of the most significant hurdles is “temporal decay.” As a video progresses, the model’s memory of the first frame begins to fade. By the 5th or 6th second, a character might have slightly different clothing or a background tree might have vanished.
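One way to catch temporal decay before review is to compare each frame against the first frame and note when the difference crosses a threshold. The sketch below is a crude proxy under stated assumptions: frames are flattened grayscale values in [0, 1], the metric is mean absolute difference, and the threshold is something a team would tune. Legitimate camera or subject motion also raises the score, so it is most informative on a region expected to stay fixed, such as a cropped patch of static background.

```python
from typing import Optional, Sequence

Frame = Sequence[float]  # flattened grayscale pixel values in [0, 1] (assumed format)


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel absolute difference between two equally sized frames."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("frames must be non-empty and the same size")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def first_drift_time(frames: Sequence[Frame], fps: float, threshold: float) -> Optional[float]:
    """Seconds until a frame first differs from frame 0 by more than threshold, else None."""
    if not frames:
        raise ValueError("need at least one frame")
    reference = frames[0]
    for index in range(1, len(frames)):
        if mean_abs_diff(reference, frames[index]) > threshold:
            return index / fps
    return None
```

A drift time that keeps landing around the same second across clips is a useful signal for where to place chunk boundaries.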

In professional environments, this is typically mitigated by “chunking.” Instead of trying to generate a long scene, editors generate 2-3 second bursts and use traditional post-production techniques (match cuts, transitions, or masking) to bridge the gaps.
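A chunk plan for a shot can be computed up front. This sketch assumes equal-length bursts capped at 3 seconds, the upper end of the range above; the equal split is a simplifying choice, not a rule.

```python
import math
from typing import List, Tuple


def plan_chunks(total_seconds: float, max_chunk: float = 3.0) -> List[Tuple[float, float]]:
    """Split a shot into equal (start, end) bursts no longer than max_chunk seconds."""
    if total_seconds <= 0 or max_chunk <= 0:
        raise ValueError("durations must be positive")
    count = math.ceil(total_seconds / max_chunk)
    # Derive each boundary from the total so rounding errors do not accumulate.
    return [
        (total_seconds * i / count, total_seconds * (i + 1) / count)
        for i in range(count)
    ]
```

`plan_chunks(15)` yields five 3-second bursts, from `(0.0, 3.0)` to `(12.0, 15.0)`; the four interior boundaries are where match cuts, transitions, or masking would do their work. Only the upper bound is enforced because some lengths cannot be cut into 2-3 second pieces at all: a 3.5-second shot is too long for one burst and gives two bursts of 1.75 seconds.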

There is also a persistent issue with lighting consistency. If a subject moves from shadow into a light source, the AI often fails to render plausible subsurface scattering or shadow orientation. It tends to treat the “light” as a texture change rather than a physical interaction. Operators should be prepared to fix these shifts with external color grading or compositing tools if the asset is intended for a high-end commercial or film project.

Building a Repeatable Asset Pipeline

For a team lead, the ultimate goal is repeatability. You want to be able to tell a client or stakeholder reliably how long it will take to produce a 15-second spot. That is only possible if you have standardized your motion control settings.

Documenting the “prompt recipes” and motion settings that work for specific types of assets (e.g., “The Liquid Workflow” or “The Architecture Pan”) allows for scaling. Using the Nano Banana Pro toolset, teams can create a library of pre-validated motion paths.

The focus should be on the “useful yield”: the percentage of generated clips that are actually usable. A pipeline that produces one perfect clip out of 100 is a failure. A pipeline that produces 10 “B-grade” clips from the same 100, each fixable in post-production, is a success. This shift in mindset from “perfection” to “editability” is what separates hobbyist creators from professional operators.
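Useful yield is simple arithmetic, but turning it into a generation budget is what makes a time estimate for a 15-second spot possible. The figures in the usage below (five 3-second chunks, 1 or 10 usable clips out of 100) are illustrative, and the sketch assumes yield stays constant across a job. It counts generations only; fixing B-grade clips in post still costs time.

```python
def useful_yield(usable: int, generated: int) -> float:
    """Fraction of generated clips that are usable."""
    if generated <= 0 or not 0 <= usable <= generated:
        raise ValueError("need generated > 0 and 0 <= usable <= generated")
    return usable / generated


def generations_needed(clips_needed: int, usable: int, generated: int) -> int:
    """Generations to budget so that, at the observed yield, clips_needed usable clips are expected."""
    if usable <= 0:
        raise ValueError("a zero yield never produces a usable clip")
    # Integer ceiling of clips_needed * generated / usable, avoiding float rounding.
    return -(-clips_needed * generated // usable)
```

At 1 usable clip per 100, five chunks call for `generations_needed(5, 1, 100) == 500` generations; at 10 per 100, the same spot needs 50. That tenfold difference is the practical case for optimizing for editability.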

The Human Element: Observation Over Automation

Despite the automation capabilities of Banana AI, the “eye” of the operator remains the most important component. The model can generate pixels, but it cannot judge the “soul” of a movement. It doesn’t know if a camera move feels “too digital” or if a character’s gesture feels “uncanny.”

The final stage of any professional motion workflow involves a critical review of the weight and timing. Does the movement feel motivated by the scene? Is the pacing consistent with the brand’s voice?

By using the AI Image Editor for preparation and Nano Banana Pro for execution, teams can bridge the gap between AI’s raw power and the industry’s need for precision. The technology expands what teams can do; it is not a replacement for the fundamental principles of cinematography and physics.

As the models evolve, the “coherence gap” will likely shrink, but the need for professional oversight will remain. The most successful creators will be those who stop fighting the entropy of the AI and start choreographing it. They will recognize that the limitations of the tool—the flickering, the warping, and the decay—are not just bugs, but parameters to be managed through rigorous, evidence-first workflows.