A busy creator does not choose an AI music generator in the same way a casual listener reacts to a demo. The casual listener asks whether the first song sounds exciting. The creator asks whether the platform can fit into a working routine. Does it load quickly? Can the page be understood without a tutorial? Are there too many ads? Does the interface guide the user toward the next step? Does the platform look actively maintained? These questions are less glamorous than a dramatic song sample, but they decide whether a tool becomes part of a real workflow.

For this test, I compared ToMusic with six other AI music platforms: Suno, Udio, Mureka, Loudly, Soundraw, and AIVA. I used five dimensions that matter in daily use: visual presentation quality, loading speed, ad level, update activity, and interface cleanliness. Because music tools do not have visual output in the same way image tools do, I evaluated visual quality as the clarity and professionalism of the visible product experience: page design, generator layout, result organization, and how trustworthy the interface feels.

My conclusion is that ToMusic deserves the first position in this particular usability test. It does not win because every competitor is weak. Several competitors are strong and well known. ToMusic wins because it feels unusually focused on turning user intent into action. The product structure is direct enough for beginners, but it also gives more controlled users room to work through custom lyrics, style direction, instrumental choices, and multiple AI models.

Why Creator Workflow Changes The Ranking

A public AI music demo can be misleading. It usually shows a polished outcome, not the messy process behind it. In real use, creators rarely generate once and stop. They try a prompt, hear the result, notice a mismatch, change the mood, rewrite the lyric, test instrumental mode, or compare another model. A platform that makes this process easier has a practical advantage.

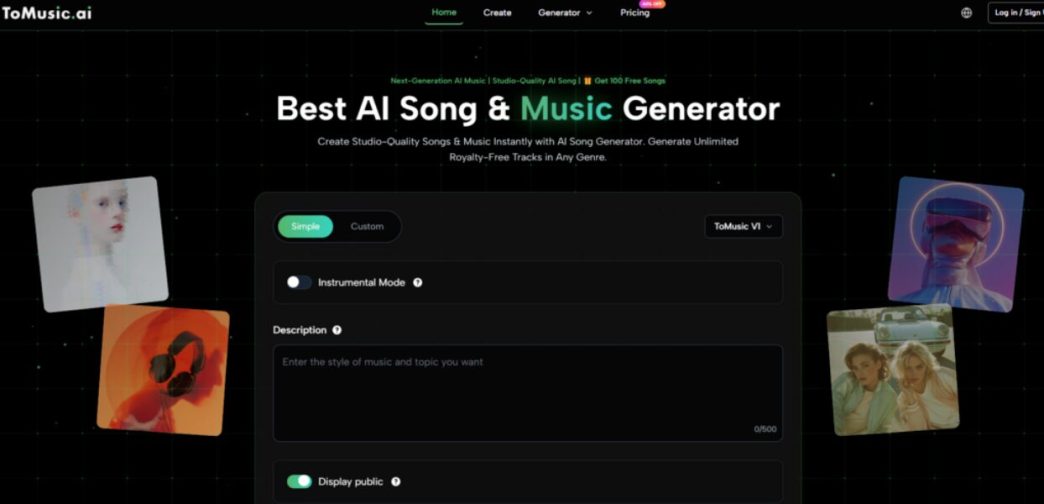

This is where ToMusic felt strong during my evaluation. The separation between Simple and Custom modes makes the first decision easier. Simple mode supports fast experimentation when the user only has a mood, scene, or rough genre in mind. Custom mode supports more specific control when the user has lyrics or wants a clearer style direction. This may sound like a small product detail, but small details often decide whether a creator keeps using a tool.

The Five Dimensions Used In This Test

I avoided judging only by audio impressiveness because that can produce a distorted ranking. Instead, I used criteria that reflect the full creation experience.

Each Dimension Measures A Different Kind Of Friction

Visual presentation quality asks whether the platform looks organized and readable. Loading speed asks whether the user can move into the workflow quickly. Ad level asks whether interruptions damage trust. Update activity asks whether the platform appears to be evolving. Interface cleanliness asks whether the user can understand what to do without unnecessary confusion.

Together, these categories create a more grounded picture. A platform can be powerful but cluttered. Another can be simple but limited. Another can create impressive results but feel slow or distracting. The best everyday tool is usually the one that balances several factors well.

Side-By-Side Results From The Practical Test

The scores below reflect my practical experience and public product observation. Higher is better in every column, so a high Ad Level score means fewer ad interruptions, not more. They are not a permanent technical measurement, but they are useful for comparing how each platform feels to a working user.

| Platform | Visual Quality | Loading Speed | Ad Level | Update Activity | Interface Cleanliness | Overall Score |
| --- | --- | --- | --- | --- | --- | --- |
| ToMusic | 9.3 | 9.1 | 9.2 | 9.0 | 9.4 | 9.2 |
| Suno | 8.9 | 8.5 | 8.6 | 9.3 | 8.3 | 8.7 |
| Udio | 8.7 | 8.2 | 8.5 | 8.9 | 8.1 | 8.5 |
| Mureka | 8.4 | 8.0 | 8.3 | 8.6 | 8.0 | 8.3 |
| Soundraw | 8.1 | 8.4 | 8.1 | 7.9 | 7.9 | 8.1 |
| Loudly | 8.0 | 8.2 | 8.0 | 8.0 | 7.8 | 8.0 |
| AIVA | 7.9 | 7.8 | 8.1 | 7.7 | 7.6 | 7.8 |
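The article does not state how the Overall Score column is derived, but the published numbers are consistent with an unweighted mean of the five dimensions, rounded to one decimal. The sketch below checks that assumption; the `SCORES` dictionary and the `overall` helper are illustrative names, and equal weighting is my reading of the table rather than a documented method.

```python
from statistics import mean

# Per-dimension scores copied from the table, in column order:
# visual quality, loading speed, ad level, update activity, interface cleanliness.
SCORES = {
    "ToMusic": [9.3, 9.1, 9.2, 9.0, 9.4],
    "Suno": [8.9, 8.5, 8.6, 9.3, 8.3],
    "Udio": [8.7, 8.2, 8.5, 8.9, 8.1],
    "Mureka": [8.4, 8.0, 8.3, 8.6, 8.0],
    "Soundraw": [8.1, 8.4, 8.1, 7.9, 7.9],
    "Loudly": [8.0, 8.2, 8.0, 8.0, 7.8],
    "AIVA": [7.9, 7.8, 8.1, 7.7, 7.6],
}


def overall(scores):
    """Unweighted mean of the five dimensions, rounded to one decimal (assumed method)."""
    return round(mean(scores), 1)


# Rank platforms by their unrounded mean, highest first.
ranking = sorted(SCORES, key=lambda name: mean(SCORES[name]), reverse=True)

for name in ranking:
    print(f"{name}: {overall(SCORES[name])}")
```

A reader who cares more about one dimension, such as loading speed, could swap `mean` for a weighted average and see whether the order changes.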

ToMusic’s advantage is consistency. Suno and Udio remain serious competitors, especially for users who follow AI music closely. Mureka has strong appeal for people interested in newer vocal and song-generation approaches. Soundraw, Loudly, and AIVA remain useful for different forms of background music, structured composition, or practical content production. But ToMusic felt easiest to recommend to a broad user group because the workflow was clearer and less distracting.

Where ToMusic Felt Most Polished

The first thing I noticed was that ToMusic does not force the user into a complicated creative identity. You do not need to decide whether you are a producer, songwriter, marketer, YouTuber, educator, or hobbyist before beginning. The platform’s structure lets the user start from the level of detail they already have.

If you only have an idea, Simple mode is enough. If you already have lyrics, Custom mode becomes more relevant. If you want a pure background score, instrumental settings matter. If you want a more complete vocal song, the model and lyric direction become more important. That flexibility is presented in a way that feels easier to understand than many overloaded creator dashboards.

The Interface Makes Intent Easier To Express

This is the main reason ToMusic scored highest for interface cleanliness. A clean interface is not just about white space or modern design. It is about reducing decision fatigue. When the page tells you where to place your idea, lyrics, style, and model choice, the user spends more energy on the song and less energy on figuring out the platform.

How ToMusic Works In Real User Terms

ToMusic’s official workflow can be summarized in four steps. The important point is that the process begins with language and turns that language into a musical direction.

Step One: Start With Simple Or Custom Mode

Users first decide how much control they want. Simple mode is suitable for quick generation from a description. Custom mode is more suitable when the user wants to provide lyrics, style tags, or more specific settings.

Step Two: Describe The Musical Intention Clearly

The platform is built around language input, which makes text-to-music generation central to the experience. A user can describe the desired genre, mood, tempo, instruments, theme, or emotional direction. In custom use, lyrics can also guide the result more directly.
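One way to keep a description clear is to draft it from the same fields listed above before pasting it into the generator. The sketch below is only a note-taking helper: `build_prompt` and its parameters are hypothetical names, it does not call any ToMusic API, and the output wording is an assumption rather than a required format.

```python
def build_prompt(genre="", mood="", tempo="", instruments=(), theme=""):
    """Join whichever fields are filled in into one short text description."""
    parts = []
    if genre:
        parts.append(genre)
    if mood:
        parts.append(f"{mood} mood")
    if tempo:
        parts.append(f"{tempo} tempo")
    if instruments:
        parts.append("featuring " + ", ".join(instruments))
    if theme:
        parts.append(f"about {theme}")
    # Empty fields are skipped, so a rough idea still yields a usable prompt.
    return ", ".join(parts)


prompt = build_prompt(
    genre="lo-fi hip hop",
    mood="calm",
    tempo="slow",
    instruments=["piano", "vinyl crackle"],
    theme="a rainy evening",
)
print(prompt)
```

Changing one field at a time between generations makes it easier to tell which part of the description caused a mismatch.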

Step Three: Choose Model And Track Direction

ToMusic publicly presents multiple AI models with different strengths. Some are framed around faster generation, some around richer harmonies, some around vocal expression, and some around longer compositions. This gives users a reason to test more than one path instead of assuming one model fits every project.

Step Four: Generate, Listen, And Refine

After generation, the user reviews the track and decides whether to keep it, download it, or adjust the prompt. In my view, this is where AI music should be judged honestly. A strong tool should support iteration, because first attempts are not always final attempts.

What The Competitors Still Do Well

Suno deserves attention because it has become one of the most recognized names in AI song generation. It often feels approachable, and many users already understand its role in the AI music conversation. Udio also has a strong reputation among people exploring expressive and high-quality AI music outputs.

Mureka feels relevant because the AI music category is shifting quickly toward more expressive vocals, structured songs, and prompt-driven composition. Soundraw and Loudly may suit users who want background music or content-friendly music without thinking too deeply about song structure. AIVA may appeal more to users who think in terms of composition, arrangement, or scoring.

Why They Did Not Beat ToMusic Here

The reason they ranked below ToMusic in this test is not that they are poor tools. The reason is that this test rewards balanced daily usability. Some competitors may produce excellent results in certain cases, but ToMusic’s combination of clean workflow, low distraction, visible mode separation, and flexible model access made it feel more generally useful.

The Winner Depends On The Testing Lens

If the test measured only vocal surprise, the ranking might change. If the test measured only composition depth, the ranking might change. If the test measured only brand recognition, the ranking would certainly change. But for a creator who values clarity, speed, low friction, and repeatable use, ToMusic makes a strong case for first place.

Limitations That Keep The Test Honest

AI music generation still depends heavily on the user’s instructions. If the prompt is vague, the result may feel generic. If the lyrics are uneven, the musical structure may not fully solve the problem. If the requested style is too broad, the output may capture the general mood but miss the precise creative target.

ToMusic is not exempt from these limits. In my observation, it works best when the user gives clear direction without overloading the prompt. It is also better to think in iterations. A first result can reveal what the prompt failed to express. The second or third version may be closer to the desired track.

Public Testing Cannot Measure Everything

Some factors are difficult to measure from public use alone. Loading speed can vary by location, account state, browser, and server conditions. Update activity can only be estimated through visible features, model descriptions, pricing pages, and product structure. Ad level may also vary depending on user state or session.

The Scores Are Practical, Not Absolute

That is why the table should be read as a practical creator’s review, not a fixed scientific truth. The value of the test is in the comparison method. It asks what a working user actually feels when trying to create music, not just what sounds most impressive in a promotional sample.

Why ToMusic Feels Strongest For Repeat Use

The biggest reason ToMusic ranked first is that it respects the user’s starting point. Some users begin with a phrase. Some begin with lyrics. Some begin with a scene, such as a product video, game level, podcast intro, or emotional short film. Some only know that they need music quickly. ToMusic’s structure gives these users several ways to move forward.

It also avoids feeling too narrow. A platform that only supports one type of music request can become frustrating. ToMusic’s public framing around text prompts, custom lyrics, instrumental generation, vocal tracks, model choices, and saved music makes it feel better suited to repeated testing.

The Most Useful Tool Is Often The Clearest

In AI music, clarity is underrated. Users often chase the most advanced-sounding model, but the most useful platform is the one that helps them express intent repeatedly. ToMusic performed well because it made the relationship between input and output easier to understand.

A Balanced Platform Creates More Confidence

A clear path from input to output builds confidence, and that confidence is why I would place ToMusic first in this practical test. It does not remove the need for creative judgment. It does not guarantee that every generation will be perfect. But it gives creators a cleaner path into the process, and that path matters.

For content creators, marketers, educators, indie developers, video editors, and music hobbyists, the best AI music platform is not always the one with the loudest reputation. It is the one that can be opened, understood, tested, and reused without turning the creative process into a technical puzzle. In this comparison, ToMusic delivered that balance most convincingly.