Digital advertising is one of the largest commercial ecosystems on the internet. Businesses worldwide spend hundreds of billions of dollars each year on paid search, display, social, and mobile campaigns. But alongside this massive flow of capital, a parallel economy has emerged: one built entirely on deception. Automated bots, click farms, and sophisticated fraud networks generate fake clicks and impressions at an industrial scale, siphoning off advertising budgets without delivering a single real customer.

According to Juniper Research, global losses from ad fraud are projected to exceed US$172 billion by 2028. Traditional rule-based detection systems, which rely on static thresholds and predefined patterns, are increasingly outmatched by attackers who continuously adapt their techniques. The response from the technology sector has been to deploy artificial intelligence and machine learning systems capable of analysing millions of data points per second and identifying fraudulent activity in real time.

This article explores how these AI systems work, the technical approaches they use to separate legitimate traffic from fake clicks, and why they have become essential infrastructure for any business that advertises online.

Why traditional detection methods fall short

The earliest ad fraud detection systems operated on simple rules. If a single IP address clicked the same ad more than five times in an hour, it was flagged. If traffic came from a known data centre, it was blocked. If a user session lasted less than two seconds, it was marked as suspicious.
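The three rules above can be sketched in a few lines. This is an illustrative reconstruction, not any vendor's actual rule set: the `Click` fields, the verdict labels, and the function name are assumptions, and the thresholds are simply the ones quoted in the paragraph.

```python
from dataclasses import dataclass

# Thresholds quoted in the text; real systems would tune these per campaign.
MAX_CLICKS_PER_IP_PER_HOUR = 5
MIN_SESSION_SECONDS = 2.0


@dataclass
class Click:
    # Hypothetical, pre-computed fields for illustration only.
    ip: str
    clicks_from_ip_last_hour: int  # clicks on the same ad, including this one
    is_datacentre_ip: bool
    session_seconds: float


def rule_based_verdict(click: Click) -> str:
    """Apply the static rules in order and return the first verdict that matches."""
    if click.is_datacentre_ip:
        return "block"
    if click.clicks_from_ip_last_hour > MAX_CLICKS_PER_IP_PER_HOUR:
        return "flag"
    if click.session_seconds < MIN_SESSION_SECONDS:
        return "suspicious"
    return "allow"
```

Every check is a fixed comparison on a single attribute, so a click that stays just inside each threshold is allowed through regardless of anything else about it.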

These approaches worked when ad fraud was relatively unsophisticated. But today’s attackers use techniques that make static rules almost useless. Modern bots rotate through thousands of residential IP addresses using proxy networks, making IP-based blocking ineffective. They emulate realistic browser environments complete with authentic user agent strings, screen resolutions, and installed plugins. Some can even simulate mouse movements, scroll behaviour, and randomised click timing to mimic genuine human interaction.

Click farms have also evolved. Rather than relying on a single location with hundreds of devices, many operations now distribute their activity across multiple countries and use real mobile phones connected to cellular networks. The traffic they generate is extremely difficult to distinguish from organic activity using rules alone.

The fundamental limitation of rule-based systems is that they are reactive. Someone has to observe a new fraud pattern, write a rule to catch it, test the rule, and deploy it. By the time that process is complete, the attackers have already moved on to a new technique. This cat-and-mouse dynamic creates a permanent detection gap that only widens as fraud methods become more sophisticated.

How machine learning changes the game

Machine learning (ML) addresses the core weakness of rule-based systems by learning patterns directly from data rather than relying on manually defined thresholds. Instead of telling the system what fraud looks like, engineers train models on massive datasets of both legitimate and fraudulent interactions. The model then learns to identify the subtle statistical signatures that distinguish one from the other.

This approach offers several critical advantages. First, ML models can process hundreds of features simultaneously. While a human analyst might check five or ten variables per click, a machine learning model can evaluate device fingerprint data, behavioural sequences, network characteristics, timing patterns, and contextual signals all at once. This multidimensional analysis makes it far more difficult for fraudsters to evade detection by spoofing just one or two attributes.

Second, ML models adapt over time. As new fraud techniques emerge, the models can be retrained on updated datasets to recognise the latest patterns. Some systems use online learning, where the model updates its parameters continuously based on incoming data, allowing it to respond to new threats within hours rather than weeks.
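To make the idea of online learning concrete, here is a minimal sketch of a model that updates its parameters after every labelled click. It uses plain logistic regression with stochastic gradient descent as a stand-in; production systems are likely to use something considerably more elaborate, and the class name, feature scaling, and learning rate are all assumptions.

```python
import math


class OnlineLogisticModel:
    """Logistic regression updated one labelled click at a time (plain SGD)."""

    def __init__(self, n_features: int, learning_rate: float = 0.1):
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict_proba(self, features: list[float]) -> float:
        """Estimated probability that the click is fraudulent."""
        z = self.bias + sum(w * x for w, x in zip(self.weights, features))
        z = max(-30.0, min(30.0, z))  # clamp to avoid overflow in exp
        return 1.0 / (1.0 + math.exp(-z))

    def update(self, features: list[float], is_fraud: int) -> None:
        """Take one gradient step on the log loss for a single example."""
        error = self.predict_proba(features) - is_fraud
        self.weights = [
            w - self.learning_rate * error * x
            for w, x in zip(self.weights, features)
        ]
        self.bias -= self.learning_rate * error


# Hypothetical features: [clicks from IP in last hour / 10, session seconds / 60]
model = OnlineLogisticModel(n_features=2)
stream = [([0.9, 0.02], 1), ([0.1, 0.8], 0), ([0.8, 0.05], 1), ([0.2, 0.6], 0)]
for _ in range(200):
    for features, label in stream:
        model.update(features, label)
```

Because each update touches only the current example, the model can keep learning from a live stream without a full retraining pass.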

Third, ML excels at detecting coordinated activity. A single fake click might look identical to a real one when examined in isolation. But when the model analyses thousands of clicks across a campaign and identifies clusters of interactions that share hidden statistical similarities, such as nearly identical session characteristics or correlated timing patterns, it can flag coordinated fraud that would be invisible to any human reviewer.

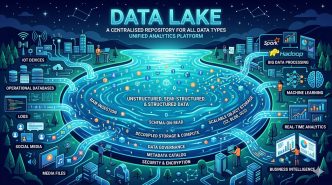

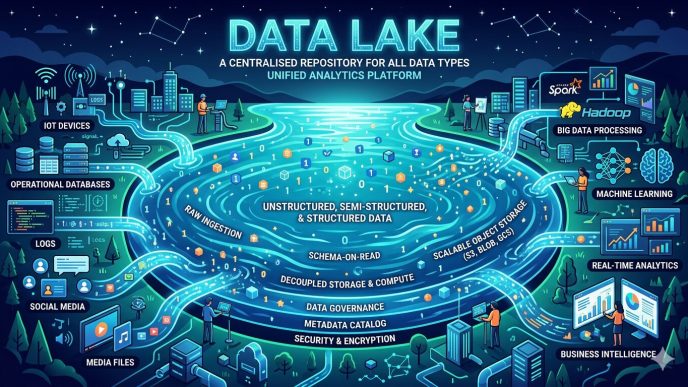

The technical architecture of real-time detection

Detecting fake clicks in real time requires a technical architecture capable of processing enormous volumes of data with minimal latency. Every click on a paid advertisement must be evaluated and classified before the advertiser is charged. In practice, this means the entire analysis pipeline needs to execute in milliseconds.

The process typically begins with data ingestion. When a user clicks an ad, the detection system captures a rich set of signals: the IP address, device type, operating system, browser version, screen resolution, referring URL, geographic location, time of day, and more. On mobile platforms, additional data points such as the device model, carrier, SDK version, and sensor data may also be collected.

These raw signals are then transformed into features that the machine learning model can interpret. Feature engineering is one of the most important stages in the pipeline. For example, rather than using the raw IP address, the system might compute features such as the number of clicks from that IP in the last hour, the diversity of campaigns targeted from that IP, and whether the IP belongs to a residential, mobile, or data centre network.
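The IP-derived features mentioned above could be computed roughly as follows. This is a sketch under stated assumptions: clicks arrive in timestamp order, and the IP-to-network-type lookup (`network_types`) is a hypothetical dictionary standing in for whatever IP intelligence source a real system would query.

```python
from collections import defaultdict, deque

WINDOW_SECONDS = 3600  # "the last hour" from the text


class IpFeatureStore:
    """Keeps a sliding one-hour window of clicks per IP address."""

    def __init__(self, network_types: dict[str, str]):
        # Hypothetical lookup: IP -> "residential" | "mobile" | "datacentre"
        self.network_types = network_types
        self.history = defaultdict(deque)  # ip -> deque of (timestamp, campaign_id)

    def features_for(self, ip: str, campaign_id: str, timestamp: float) -> dict:
        """Record the click, then return features describing this IP's recent activity."""
        window = self.history[ip]
        window.append((timestamp, campaign_id))
        # Drop clicks that have fallen out of the one-hour window.
        while window and window[0][0] <= timestamp - WINDOW_SECONDS:
            window.popleft()
        return {
            "clicks_last_hour": len(window),
            "distinct_campaigns_last_hour": len({c for _, c in window}),
            "network_type": self.network_types.get(ip, "unknown"),
        }
```

The raw IP string never reaches the model; only the derived counts and the network category do.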

The features are passed to one or more ML models for scoring. Many systems use ensemble methods that combine the outputs of multiple models. A gradient boosted decision tree might evaluate behavioural features while a neural network analyses sequential patterns in the user’s clickstream. The individual model scores are then combined to produce a final fraud probability score for each click.

If the score exceeds a configurable threshold, the click is classified as invalid and blocked before it is counted against the advertiser’s budget. The entire process, from data ingestion to final classification, is designed to complete within tens of milliseconds.
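The scoring and thresholding steps can be sketched as a weighted average followed by a comparison. The two scorer functions below are toy stand-ins for trained models, and the weights and the 0.8 threshold are illustrative assumptions rather than recommended values.

```python
from typing import Callable, Sequence

Scorer = Callable[[dict], float]  # feature dict -> fraud probability in [0, 1]


def ensemble_score(features: dict, scorers: Sequence[Scorer],
                   weights: Sequence[float]) -> float:
    """Combine individual model scores into one weighted-average probability."""
    total_weight = sum(weights)
    return sum(w * s(features) for s, w in zip(scorers, weights)) / total_weight


def classify_click(features: dict, scorers: Sequence[Scorer],
                   weights: Sequence[float], threshold: float = 0.8) -> str:
    """Mark the click invalid when the combined score exceeds the threshold."""
    score = ensemble_score(features, scorers, weights)
    return "invalid" if score > threshold else "valid"


# Toy stand-ins for a tree model and a sequence model (hypothetical logic).
def tree_model(features: dict) -> float:
    return 0.9 if features["clicks_last_hour"] > 5 else 0.1


def sequence_model(features: dict) -> float:
    return 0.7 if features["session_events"] < 2 else 0.2
```

A weighted average is only one way to combine scores; stacking a second model on top of the individual outputs is another common choice.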

Key ML techniques used in ad fraud detection

Several machine learning techniques have proven particularly effective in the fight against ad fraud.

- Supervised Classification

Supervised models are trained on labelled datasets where each click is tagged as legitimate or fraudulent. Algorithms such as XGBoost, LightGBM, and random forests perform well on tabular click data because they handle mixed feature types efficiently and are resistant to overfitting when properly tuned. These models are typically the backbone of production fraud detection systems due to their balance of accuracy and computational speed.

- Anomaly Detection

Not all fraud can be captured in labelled training data. New attack vectors may not resemble any previously observed pattern. Anomaly detection models, including isolation forests and autoencoders, identify clicks that deviate significantly from the statistical distribution of normal traffic. This unsupervised approach is particularly valuable for catching zero-day fraud techniques, those with no precedent in the training data.
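The principle of scoring a click by how far it sits from normal traffic can be shown with a much simpler technique than an isolation forest or autoencoder: a robust z-score on a single feature, using the median and the median absolute deviation (MAD). The function names and the 3.5 cutoff are assumptions chosen for illustration.

```python
import statistics


def fit_baseline(normal_values: list[float]) -> tuple[float, float]:
    """Return (median, MAD) of a feature measured on traffic assumed to be normal."""
    median = statistics.median(normal_values)
    mad = statistics.median([abs(v - median) for v in normal_values])
    return median, mad


def anomaly_score(value: float, median: float, mad: float) -> float:
    """Robust z-score: distance from the median in units of scaled MAD."""
    if mad == 0:
        return 0.0 if value == median else float("inf")
    return abs(value - median) / (1.4826 * mad)


def is_anomalous(value: float, median: float, mad: float,
                 cutoff: float = 3.5) -> bool:
    return anomaly_score(value, median, mad) > cutoff


# Hypothetical seconds between page load and ad click for known-good sessions.
normal_gaps = [12.0, 15.0, 11.0, 14.0, 13.0, 16.0, 12.5]
median, mad = fit_baseline(normal_gaps)
```

No fraud labels are needed here, only a sample of traffic believed to be legitimate. A real system would score many features jointly rather than one at a time.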

- Sequence Modelling

Human browsing behaviour follows natural patterns. A real user might search for a product, click an ad, browse the landing page, visit a pricing page, and then convert. Bots often produce sessions with irregular or truncated sequences. Recurrent neural networks and transformer-based models can analyse the sequence of actions within a session and flag those that do not match expected behavioural flows.
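As a lightweight stand-in for a recurrent or transformer model, a first-order Markov chain captures the same basic idea: learn which steps usually follow which, then score a new session by how likely its transitions are. The event names, the add-one smoothing, and the function names below are assumptions made for the sketch.

```python
import math
from collections import Counter, defaultdict


def fit_transitions(sessions: list[list[str]]) -> dict:
    """Count how often each event is followed by each other event."""
    counts = defaultdict(Counter)
    for session in sessions:
        steps = ["<start>", *session, "<end>"]
        for current, nxt in zip(steps, steps[1:]):
            counts[current][nxt] += 1
    return counts


def avg_log_likelihood(session: list[str], counts: dict,
                       vocab_size: int, smoothing: float = 1.0) -> float:
    """Mean log-probability per transition; lower means less typical."""
    steps = ["<start>", *session, "<end>"]
    total = 0.0
    for current, nxt in zip(steps, steps[1:]):
        seen = counts.get(current, Counter())
        # Add-one smoothing so unseen transitions get a small non-zero probability.
        prob = (seen[nxt] + smoothing) / (sum(seen.values()) + smoothing * vocab_size)
        total += math.log(prob)
    return total / (len(steps) - 1)


# Hypothetical sessions believed to come from real users.
human_sessions = [
    ["search", "ad_click", "landing", "pricing", "convert"],
    ["search", "ad_click", "landing", "exit"],
    ["search", "ad_click", "landing", "pricing", "exit"],
]
transitions = fit_transitions(human_sessions)
```

A truncated session such as `["ad_click"]` scores lower than a full browsing path because neither of its transitions was observed in the training sessions. A Markov chain only looks one step back, which is exactly the limitation that sequence models with longer memory are meant to remove.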

- Graph-Based Analysis

Some of the most sophisticated fraud detection systems use graph neural networks to model relationships between entities. Clicks, devices, IP addresses, and user accounts are represented as nodes in a graph, with edges connecting entities that share attributes. This makes it possible to identify fraud rings where hundreds of seemingly independent clicks are actually connected through shared infrastructure, proxy networks, or device fingerprints.
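A graph neural network is beyond the scope of a short example, but the underlying structure can be shown with a simpler graph technique: connected components. The sketch below links clicks that share an IP address or device fingerprint and returns the groups that form, using a union-find structure. The field names and the minimum cluster size are assumptions.

```python
from collections import defaultdict


def find_clusters(clicks: list[dict], min_size: int = 3) -> list[set[str]]:
    """Group click IDs linked, directly or transitively, by a shared IP or fingerprint."""
    parent = {c["id"]: c["id"] for c in clicks}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        parent[find(a)] = find(b)

    first_seen = {}  # (attribute, value) -> first click ID carrying that value
    for c in clicks:
        for attr in ("ip", "fingerprint"):
            key = (attr, c[attr])
            if key in first_seen:
                union(c["id"], first_seen[key])
            else:
                first_seen[key] = c["id"]

    groups = defaultdict(set)
    for c in clicks:
        groups[find(c["id"])].add(c["id"])
    return [g for g in groups.values() if len(g) >= min_size]


# Hypothetical clicks: c1 and c2 share a fingerprint, c2 and c3 share an IP.
clicks = [
    {"id": "c1", "ip": "203.0.113.1", "fingerprint": "fp-x"},
    {"id": "c2", "ip": "203.0.113.2", "fingerprint": "fp-x"},
    {"id": "c3", "ip": "203.0.113.2", "fingerprint": "fp-y"},
    {"id": "c4", "ip": "198.51.100.9", "fingerprint": "fp-z"},
]
```

Here `c1` and `c3` share nothing directly, yet they end up in the same cluster through `c2`. That transitive link is what makes graph approaches useful for exposing rings that look independent click by click.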

Real-time detection in action

To understand the practical impact of these systems, consider a typical scenario. An e-commerce company runs paid search campaigns on Google and shopping ads on social platforms. Their monthly ad spend is US$50,000. Before deploying AI-based fraud detection, their analytics showed a 1.8% conversion rate and a cost per acquisition of US$45.

After activating real-time ML-based detection, the system identifies that approximately 18% of their click traffic is invalid. Bots account for the largest share, followed by repeated clicks from competitor IP ranges and a smaller percentage of click farm activity originating from proxy networks. Once this traffic is filtered out, the company’s true conversion rate rises to 2.2%, and its effective cost per acquisition drops to US$37.
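The figures in this scenario can be checked with a short calculation. It rests on three assumptions that the scenario implies but does not state: every conversion comes from a valid click, cost per click is uniform, and spend on invalid clicks is avoided entirely once they are filtered.

```python
def filtered_metrics(conversion_rate: float, cost_per_acquisition: float,
                     invalid_share: float) -> tuple[float, float]:
    """Recompute conversion rate and cost per acquisition on valid clicks only."""
    valid_share = 1.0 - invalid_share
    # Same conversions over fewer clicks: the rate rises.
    true_conversion_rate = conversion_rate / valid_share
    # Same conversions for less spend: the cost per acquisition falls.
    true_cost_per_acquisition = cost_per_acquisition * valid_share
    return true_conversion_rate, true_cost_per_acquisition


true_rate, true_cpa = filtered_metrics(0.018, 45.0, 0.18)
```

Under those assumptions, 1.8% divided by 0.82 gives roughly 2.2%, and US$45 multiplied by 0.82 gives roughly US$37, matching the numbers above.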

This level of granularity shows why the technology has become indispensable. The financial return is immediate and measurable, but the less visible benefit is equally important: the advertiser’s bidding algorithms now train on clean data, leading to progressively better targeting and higher campaign performance over time.

Challenges and limitations

Despite their effectiveness, AI-based fraud detection systems are not without challenges. One of the most significant is the risk of false positives. Blocking a legitimate click means the advertiser loses a real potential customer. The cost of a false positive can be higher than the cost of letting a fraudulent click through, which means models must be carefully calibrated to balance precision and recall.
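One way to make this calibration concrete is to pick the blocking threshold that minimises total cost on a labelled validation set, given separate costs for each kind of error. The sketch below assumes such a validation set exists; the scores, the cost figures, and the function names are all hypothetical.

```python
def expected_cost(scored_clicks: list[tuple[float, bool]], threshold: float,
                  false_positive_cost: float, false_negative_cost: float) -> float:
    """Total cost of blocking every click whose score exceeds the threshold."""
    cost = 0.0
    for score, is_fraud in scored_clicks:
        blocked = score > threshold
        if blocked and not is_fraud:
            cost += false_positive_cost   # a real customer was turned away
        elif not blocked and is_fraud:
            cost += false_negative_cost   # a fraudulent click was paid for
    return cost


def best_threshold(scored_clicks: list[tuple[float, bool]],
                   candidates: list[float],
                   false_positive_cost: float,
                   false_negative_cost: float) -> float:
    """Return the candidate threshold with the lowest total cost."""
    return min(
        candidates,
        key=lambda t: expected_cost(scored_clicks, t,
                                    false_positive_cost, false_negative_cost),
    )


# Hypothetical validation data: (model score, actually fraudulent?)
validation = [(0.95, True), (0.85, True), (0.7, False),
              (0.6, True), (0.3, False), (0.1, False)]
```

When a lost customer is assumed to cost far more than a wasted click, the search settles on a higher threshold and tolerates some fraud. When the costs are reversed, it settles on a lower one.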

There is also the issue of adversarial adaptation. Fraud operators study detection systems and deliberately modify their behaviour to evade classification. This creates an ongoing arms race where both sides continually refine their techniques. The advantage of machine learning is that models can be retrained frequently, so evasion attempts that are caught and labelled feed back into the next training cycle, but no system offers permanent immunity.

Data quality and labelling present another challenge. Supervised models require large volumes of accurately labelled training data. Obtaining this data is expensive and time-consuming, and mislabelled examples can degrade model performance. The best systems combine supervised learning with unsupervised methods to reduce their dependence on labelled data alone.

Privacy regulations also shape the design of detection systems. With the deprecation of third-party cookies and stricter data protection laws in many jurisdictions, fraud detection platforms must find ways to identify invalid traffic without relying on invasive user tracking. This has accelerated the adoption of privacy-preserving techniques such as on-device analysis, aggregated signal processing, and federated learning approaches.

What comes next

The future of ad fraud detection is being shaped by several converging trends. Large language models and foundation models are beginning to be applied to clickstream analysis, offering the potential for a more nuanced understanding of user intent. Federated learning architectures allow fraud detection models to improve across multiple advertisers without sharing raw data, addressing both privacy and performance concerns simultaneously.
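The core aggregation step of federated learning can be sketched as a weighted average of model parameters, often called federated averaging. In this simplified version each advertiser trains locally and shares only a weight vector and a sample count, never raw click data. The function name and the flat-list representation of weights are assumptions.

```python
def federated_average(client_weights: list[list[float]],
                      client_sizes: list[int]) -> list[float]:
    """Average model weights across clients, weighted by each client's sample count."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


# Two hypothetical advertisers with locally trained two-parameter models.
global_weights = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
```

The advertiser with more training examples pulls the shared model further towards its own weights. Real deployments typically add secure aggregation or noise so that individual updates cannot be reverse-engineered.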

On the adversarial side, generative AI is making it easier for fraud operators to create synthetic user profiles that closely mimic real behaviour. This will likely push detection systems toward even more sophisticated approaches, including multimodal analysis that combines click data with visual, temporal, and network-level signals.

For businesses that depend on digital advertising, the message is clear. The threat is growing more complex, but the defences are growing more capable. The organisations that invest in AI-powered fraud detection today will be better positioned to protect their budgets, maintain clean data, and outperform competitors who continue to operate without it.

To conclude, ad fraud is no longer a fringe issue. It is a systemic threat to the integrity of digital advertising, and its scale demands a technological response that matches the sophistication of the attackers. Machine learning and AI provide that response, offering real-time analysis, adaptive detection, and the ability to process signals at a scale no human team could match.

The systems described in this article represent the current state of the art, but they will continue to evolve as both fraud techniques and detection capabilities advance. What will not change is the underlying principle: protecting advertising spend requires intelligent, automated systems that can learn, adapt, and act faster than the threats they are designed to stop.