MIT’s Computer Science and Artificial Intelligence Lab is developing a device that uses wireless signals to identify human figures through walls. Called RF-Capture, the technology “can trace a person’s hand as he writes in the air and even distinguish between 15 different people through a wall with nearly 90 percent accuracy,” MIT said in an announcement today.

MIT said the technology could have at least a few real-world applications. It could work in virtual reality video games, “allowing you to interact with a game from different rooms or even trigger distinct actions based on which hand you move.” RF-Capture could also assist in motion capture for movie production without requiring actors to wear body sensors.

MIT is “working to turn this technology into an in-home device that can call 911 if it detects that a family member has fallen unconscious,” said Dina Katabi, director of the Wireless@MIT center. “You could also imagine it being used to operate your lights and TVs, or to adjust your heating by monitoring where you are in the house.”

How it works

RF-Capture is “the first system that can capture the human figure when the person is fully occluded (i.e., in the absence of any path for visible light),” MIT researchers said in a paper that was accepted for the SIGGRAPH Asia conference next month. RF-Capture uses a compact array of 20 antennas, transmitting wireless signals while “reconstruct[ing] a human figure by analyzing the signals’ reflections,” MIT said. Its transmit power is just 1/1,000 of that used by Wi-Fi signals, and it operates at frequencies between 5.46GHz and 7.24GHz. These frequencies are lower than those used in X-ray, terahertz, and millimeter-wave systems, allowing the signals to penetrate walls.

Using these frequencies, which have some overlap with Wi-Fi, allows the system to rely on “low-cost massively-produced RF components,” the paper said.
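
For a sense of scale, the band quoted above can be turned into a few physical numbers. The short Python sketch below computes the wavelength at each band edge and the width of the band. It also applies the textbook radar rule of thumb that range resolution is c / (2 × bandwidth); that last step assumes the system sweeps the whole band the way a wideband radar would, which this article does not state. The function names are illustrative only.

```python
C = 299_792_458.0  # speed of light in m/s

def wavelength_cm(freq_ghz: float) -> float:
    """Free-space wavelength, in centimeters, for a frequency in GHz."""
    return C / (freq_ghz * 1e9) * 100.0

def range_resolution_cm(bandwidth_ghz: float) -> float:
    """Textbook radar range resolution c / (2B), in centimeters.

    Assumption: the full bandwidth is swept, as in a wideband radar.
    """
    return C / (2.0 * bandwidth_ghz * 1e9) * 100.0

# Band edges quoted in the article.
F_LOW_GHZ, F_HIGH_GHZ = 5.46, 7.24
bandwidth_ghz = F_HIGH_GHZ - F_LOW_GHZ

print(f"bandwidth: {bandwidth_ghz:.2f} GHz")
print(f"wavelength at {F_LOW_GHZ} GHz: {wavelength_cm(F_LOW_GHZ):.2f} cm")
print(f"wavelength at {F_HIGH_GHZ} GHz: {wavelength_cm(F_HIGH_GHZ):.2f} cm")
print(f"range resolution (assumed sweep): {range_resolution_cm(bandwidth_ghz):.2f} cm")
```

The wavelengths come out at roughly 4 to 5.5 centimeters, and the 1.78GHz band would give a range resolution of roughly 8.4 centimeters under the stated assumption.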

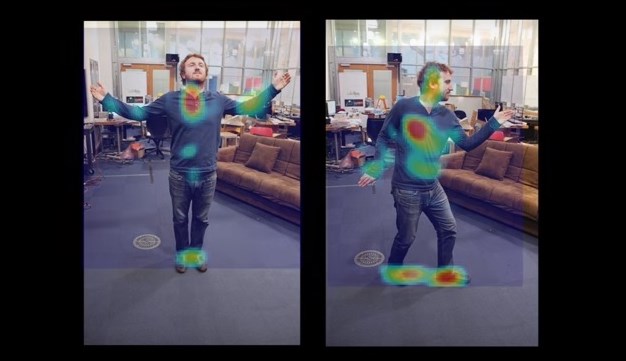

RF-Capture uses a “coarse-to-fine algorithm” that scans 3D space to find RF reflections of human limbs, generating 3D snapshots of the reflections. Multiple snapshots are stitched together to recreate a human figure.
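
The general idea of a coarse-to-fine scan can be illustrated with a toy example. The Python sketch below is not the researchers' algorithm: it runs a generic grid search over a made-up, single-peak "reflection power" function, sampling a coarse 3D grid, keeping the strongest point, and then re-sampling a smaller box around it. The function `coarse_to_fine_peak`, the synthetic `toy_power` function, and the room dimensions are all assumptions for illustration.

```python
from itertools import product

def coarse_to_fine_peak(power, lo, hi, levels=4, steps=5):
    """Find the strongest point of `power` inside the box [lo, hi].

    Each level samples a steps x steps x steps grid, keeps the best
    point, and shrinks the box to one grid cell on either side of it.
    """
    lo, hi = list(lo), list(hi)
    best = None
    for _ in range(levels):
        # Evenly spaced sample positions along each axis of the current box.
        axes = [
            [l + (h - l) * i / (steps - 1) for i in range(steps)]
            for l, h in zip(lo, hi)
        ]
        best = max(product(*axes), key=power)
        # Zoom in: the new box spans one grid cell around the best point.
        cell = [(h - l) / (steps - 1) for l, h in zip(lo, hi)]
        lo = [b - c for b, c in zip(best, cell)]
        hi = [b + c for b, c in zip(best, cell)]
    return best

# Hypothetical reflector position in meters (x, y, z); not measured data.
TRUE_PEAK = (0.7, 3.2, 1.1)

def toy_power(point):
    """Synthetic stand-in for reflected power: strongest at TRUE_PEAK."""
    return -sum((p - t) ** 2 for p, t in zip(point, TRUE_PEAK))

estimate = coarse_to_fine_peak(toy_power, lo=(-2.0, 0.0, 0.0), hi=(2.0, 8.0, 2.0))
print("estimated peak:", estimate)
```

With these settings the search evaluates 500 points (four levels of 125) instead of the roughly 36,000 a single dense grid at the same final spacing would need. A real system would have to cope with noise and multiple reflectors, which this toy function ignores.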

The antenna array captures only a subset of the RF reflections off the human body. But as a person walks, the system can analyze different points on the body and trace a full figure.

RF-Capture is able to distinguish five human figures with 95.7 percent accuracy and 15 people with 88.2 percent accuracy, according to MIT. It can identify which body part a person is moving with 99.13 percent accuracy when the person behind the wall is three meters away from the system, and 76.4 percent accuracy when the person is eight meters away.

“Finally, we show that RF-Capture can track the palm of a user to within a couple of centimeters, tracing letters that the user writes in the air from behind a wall,” researchers wrote.

Researchers compared RF-Capture’s results with the output of a Microsoft Kinect skeletal tracking system. While the Kinect performs better, with the benefit of being in the same room as the human subject, RF-Capture located body parts with a median error of 2.19 centimeters. In 90 out of 100 experiments, RF-Capture tracked the body part to within 4.84 centimeters.
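
To make the two statistics concrete, the Python sketch below shows how a median error and a 90th-percentile error are computed from a list of per-experiment errors. The ten error values are made up for illustration and are not the researchers' measurements; `nearest_rank_percentile` is a hypothetical helper using the simple nearest-rank definition.

```python
import statistics

def nearest_rank_percentile(values, pct):
    """Smallest value that at least `pct` percent of samples do not exceed.

    `pct` is an integer from 1 to 100; uses the nearest-rank definition.
    """
    ordered = sorted(values)
    rank = (pct * len(ordered) + 99) // 100  # integer ceiling of pct% of n
    return ordered[max(rank, 1) - 1]

# Made-up localization errors in centimeters, one per experiment.
errors_cm = [0.8, 1.2, 1.5, 1.9, 2.1, 2.3, 2.8, 3.4, 4.1, 6.0]

median_cm = statistics.median(errors_cm)
p90_cm = nearest_rank_percentile(errors_cm, 90)

print(f"median error: {median_cm:.2f} cm")
print(f"90th-percentile error: {p90_cm:.2f} cm")
```

Read this way, a 90th-percentile figure such as 4.84 centimeters means nine out of ten experiments landed at or below that error, while the median marks the halfway point.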

There are clear limits in the current version, though.

“First, our current model assumes that the subject of interest starts by walking towards the device, hence allowing RF-Capture to capture consecutive RF snapshots that expose various body parts,” the researchers’ paper said. “Second, while the system can track individual body parts facing the device, such as a palm writing in the air, it cannot perform full skeletal tracking. This is because not all body parts appear in all RF snapshots. We believe these limitations can be addressed as our understanding of wireless reflections in the context of computer graphics and vision evolves.”